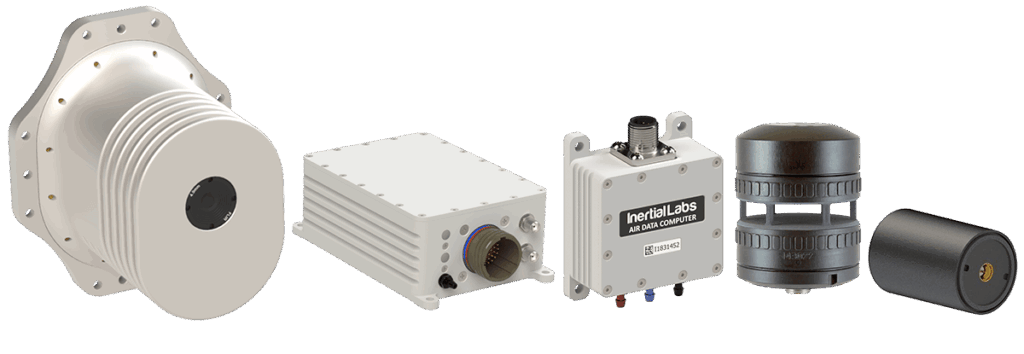

Inertial Labs has released an article that outlines how sensor fusion and Kalman filtering are used in the company’s inertial systems, such as AHRS (attitude and heading reference systems) and INS (inertial navigation systems). The article also explains various applications of these systems, such as autonomous navigation for UAVs (unmanned aerial vehicles) and UGVs (unmanned ground vehicles), remote sensing and antenna pointing.

Sensor fusion is the process of combining inputs from multiple sensors into a single model that provides more accurate results than any of the individual inputs alone. The article outlines three fundamental methods of sensor fusion: redundant sensors, complementary sensors and coordinated sensors.
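For the redundant-sensor case, where several sensors measure the same quantity, one common fusion technique is an inverse-variance weighted average. The sketch below illustrates only that case and assumes independent, unbiased sensor errors with known variances. The function name `fuse_redundant` and the example values are hypothetical and are not taken from Inertial Labs' products.

```python
from typing import Sequence, Tuple


def fuse_redundant(readings: Sequence[float], variances: Sequence[float]) -> Tuple[float, float]:
    """Fuse redundant readings of the same quantity by inverse-variance weighting.

    Returns (fused_estimate, fused_variance). Assumes independent, unbiased
    sensor errors whose variances are known in advance.
    """
    if not readings or len(readings) != len(variances):
        raise ValueError("need one variance per reading")
    if any(v <= 0 for v in variances):
        raise ValueError("variances must be positive")
    # Less noisy sensors (smaller variance) get proportionally larger weights.
    weights = [1.0 / v for v in variances]
    total = sum(weights)
    estimate = sum(w * r for w, r in zip(weights, readings)) / total
    # The fused variance is smaller than the smallest individual variance.
    return estimate, 1.0 / total


if __name__ == "__main__":
    # Hypothetical example: two gyroscopes measuring the same yaw rate (deg/s).
    print(fuse_redundant([10.0, 20.0], [1.0, 4.0]))  # prints (12.0, 0.8)
```

The fused variance (0.8) is lower than that of the better sensor alone (1.0), which is the sense in which the combined model is more accurate than any individual input.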

Kalman filters work on a series of measurements observed over time, which may contain statistical noise and other inaccuracies that would otherwise cause sensor outputs to become skewed over time. From these measurements the filter estimates the unknown variables of interest, such as orientation or position, and these estimates tend to be more accurate than the data recorded by the sensors alone. The filter also calculates the uncertainty of each estimate and combines its prediction with each new measurement as a weighted average, giving more weight to whichever is more certain.
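A one-dimensional Kalman filter makes the weighted-average idea concrete. This minimal sketch assumes a hypothetical scalar state modeled as a random walk (for example, a slowly drifting sensor bias), with process noise variance `q` and measurement noise variance `r`. The class name and parameters are illustrative only; the filters in an AHRS or INS track many coupled states at once.

```python
from dataclasses import dataclass


@dataclass
class ScalarKalman:
    """Minimal 1D Kalman filter for a state modeled as a random walk."""

    x: float  # current estimate of the state
    p: float  # variance (uncertainty) of that estimate
    q: float  # process noise variance added at each prediction step
    r: float  # variance of each measurement

    def step(self, z: float) -> float:
        # Predict: the model says the state is unchanged, but uncertainty grows by q.
        self.p += self.q
        # Kalman gain in [0, 1]: the relative trust placed in the new measurement.
        k = self.p / (self.p + self.r)
        # Update: weighted average of the prediction and the measurement.
        self.x = (1.0 - k) * self.x + k * z
        # The combined estimate is more certain than either input alone.
        self.p = (1.0 - k) * self.p
        return self.x


if __name__ == "__main__":
    kf = ScalarKalman(x=0.0, p=1000.0, q=0.0, r=1.0)
    for z in [4.0, 6.0] * 50:  # noisy readings of a true value of 5.0
        kf.step(z)
    print(round(kf.x, 3), round(kf.p, 4))
```

The gain `k` shrinks as the estimate's variance `p` falls relative to `r`, so each new measurement moves a well-established estimate less than it moves an uncertain one.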

Inertial Labs’ OptoAHRS-II is an optically enhanced AHRS that uses reference images, such as pictures of the horizon or of nearby objects in a given direction, to identify a constellation of visual features. Subsequent images can then be used to determine heading by comparing them with the appropriate reference image. This data is incorporated into the device’s sensor fusion solution, making the heading estimate resilient to changes in magnetic interference in the environment.
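The heading-by-comparison step can be sketched geometrically. This is not Inertial Labs' algorithm: it assumes an undistorted pinhole camera with a known focal length `focal_px` and principal-point column `cx` (both in pixels), pure yaw rotation between the two images, distant features (no parallax), and that feature matching against the reference image has already produced pairs of pixel columns. The function name `heading_from_matches` is hypothetical.

```python
import math
from typing import Sequence, Tuple


def heading_from_matches(
    ref_heading_deg: float,
    matches: Sequence[Tuple[float, float]],  # (x_ref, x_cur) pixel columns of one feature
    focal_px: float,
    cx: float,
) -> float:
    """Estimate the current heading (deg, 0-360) from horizontal shifts of matched features.

    Assumes pure yaw rotation, distant features and an undistorted pinhole camera;
    heading increases clockwise, i.e. toward the right of the image.
    """
    if not matches:
        raise ValueError("need at least one matched feature")
    sin_sum = cos_sum = 0.0
    for x_ref, x_cur in matches:
        # Angle of the feature relative to the optical axis in each image.
        a_ref = math.atan2(x_ref - cx, focal_px)
        a_cur = math.atan2(x_cur - cx, focal_px)
        # World bearing = heading + angle, so heading_cur = heading_ref + a_ref - a_cur.
        h = math.radians(ref_heading_deg) + a_ref - a_cur
        sin_sum += math.sin(h)
        cos_sum += math.cos(h)
    # Circular mean of the per-feature estimates avoids wrap-around errors at north.
    return math.degrees(math.atan2(sin_sum, cos_sum)) % 360.0
```

Averaging on the unit circle rather than averaging raw angles keeps the estimate correct when per-feature headings straddle north (for example, 359° and 1°).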

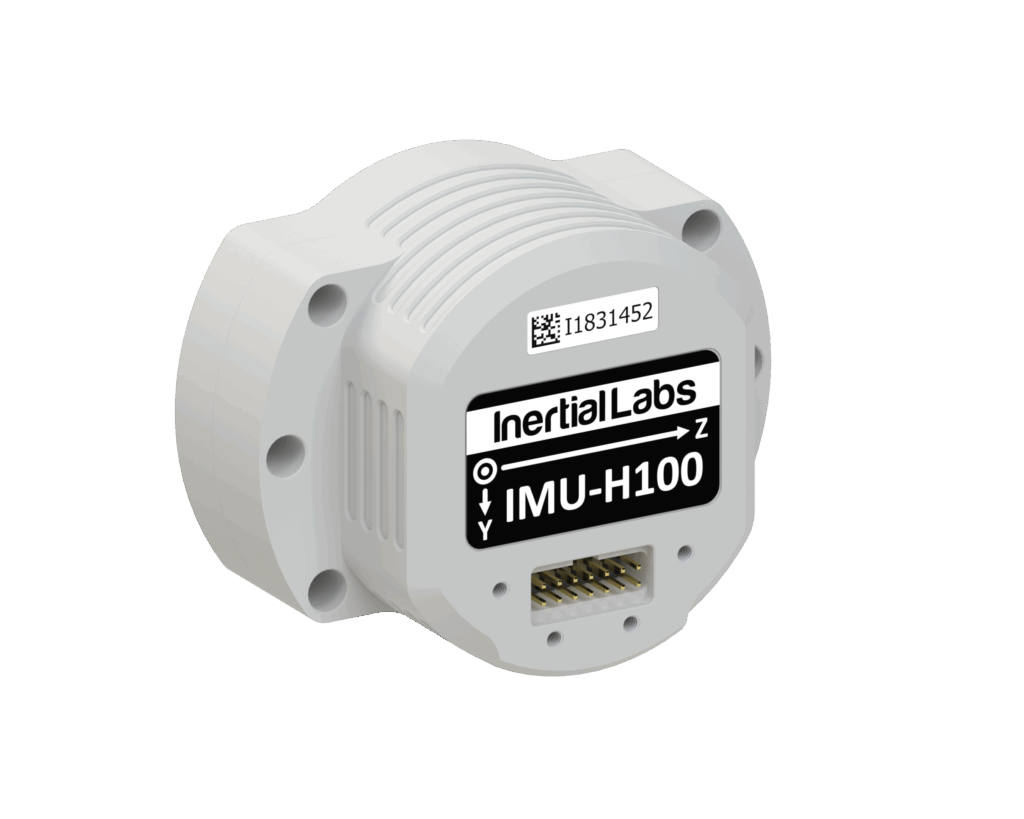

The INS-P inertial navigation system also uses sensor fusion to determine the position, velocity and orientation of a system in 3D space. Inputs from magnetometers, MEMS gyroscopes, accelerometers and GPS are fed into a Kalman filter, which estimates these quantities.
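The prediction and correction loop of such a filter can be sketched in one dimension: accelerometer readings propagate position and velocity between fixes, and each GPS position fix corrects the drift that accumulates in between. This is a simplified illustration, not the INS-P filter; a real INS estimates 3D position, velocity and orientation, also uses gyroscope and magnetometer data, and typically models sensor biases. The class name `PosVelKF`, the initial covariance and the diagonal process noise are assumptions made for this sketch.

```python
class PosVelKF:
    """1D position/velocity Kalman filter: accelerometer-driven prediction, GPS position update."""

    def __init__(self, q_pos: float, q_vel: float, r_gps: float) -> None:
        self.x = [0.0, 0.0]                    # state: [position (m), velocity (m/s)]
        self.P = [[100.0, 0.0], [0.0, 100.0]]  # large initial uncertainty (assumed)
        self.q_pos = q_pos                     # process noise variance added to position per step
        self.q_vel = q_vel                     # process noise variance added to velocity per step
        self.r_gps = r_gps                     # GPS position noise variance (m^2)

    def predict(self, accel: float, dt: float) -> None:
        """Propagate with x' = F x + B a, where F = [[1, dt], [0, 1]] and B = [dt^2/2, dt]."""
        p, v = self.x
        self.x = [p + v * dt + 0.5 * accel * dt * dt, v + accel * dt]
        (p00, p01), (p10, p11) = self.P
        # P' = F P F^T + Q, expanded by hand for the 2x2 case (Q diagonal).
        self.P = [
            [p00 + dt * (p01 + p10) + dt * dt * p11 + self.q_pos, p01 + dt * p11],
            [p10 + dt * p11, p11 + self.q_vel],
        ]

    def update_gps(self, z: float) -> None:
        """Correct the state with a GPS position measurement (H = [1, 0])."""
        (p00, p01), (p10, p11) = self.P
        s = p00 + self.r_gps          # innovation variance
        k0, k1 = p00 / s, p10 / s     # Kalman gain for position and velocity
        y = z - self.x[0]             # innovation: measured minus predicted position
        self.x = [self.x[0] + k0 * y, self.x[1] + k1 * y]
        # P' = (I - K H) P
        self.P = [
            [(1.0 - k0) * p00, (1.0 - k0) * p01],
            [p10 - k1 * p00, p11 - k1 * p01],
        ]


if __name__ == "__main__":
    kf = PosVelKF(q_pos=1e-4, q_vel=1e-3, r_gps=0.25)
    dt = 0.1
    for k in range(1, 201):
        kf.predict(accel=0.0, dt=dt)    # simulated vehicle moving at a constant 2 m/s
        noise = 0.5 if k % 2 else -0.5  # deterministic stand-in for GPS noise
        kf.update_gps(2.0 * k * dt + noise)
    print(kf.x)
```

Even though the filter never measures velocity directly, the GPS corrections feed into the velocity estimate through the off-diagonal covariance terms, which is how fusing the two sources recovers a state that neither sensor observes cleanly on its own.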

To find out more about sensor fusion and Kalman filtering for inertial systems, read the full article here.